Ready to build better conversations?

Simple to set up. Easy to use. Powerful integrations.

Get free access

TripCity resolves 87% of their inbound calls with Aircall’s AI Agents. By almost any measure, that's a success story. And yet their team continues to attend our webinars, try out new features, make changes, test configurations, and watch what shifts.

That's not unusual for teams seeing the strongest results from AI agents. It's actually what strong performance looks like.

The companies getting the most out of AI agents aren't the ones who got it right on the first deployment. They're the ones who built a habit of improvement, treating their AI agent as something to be developed over time rather than handed off and monitored from a distance. Going live was just the beginning; it's the iteration since that's driven the results.

For most businesses, that's where things get harder, and that's what Agent Performance is designed to fix.

Introducing Agent Performance

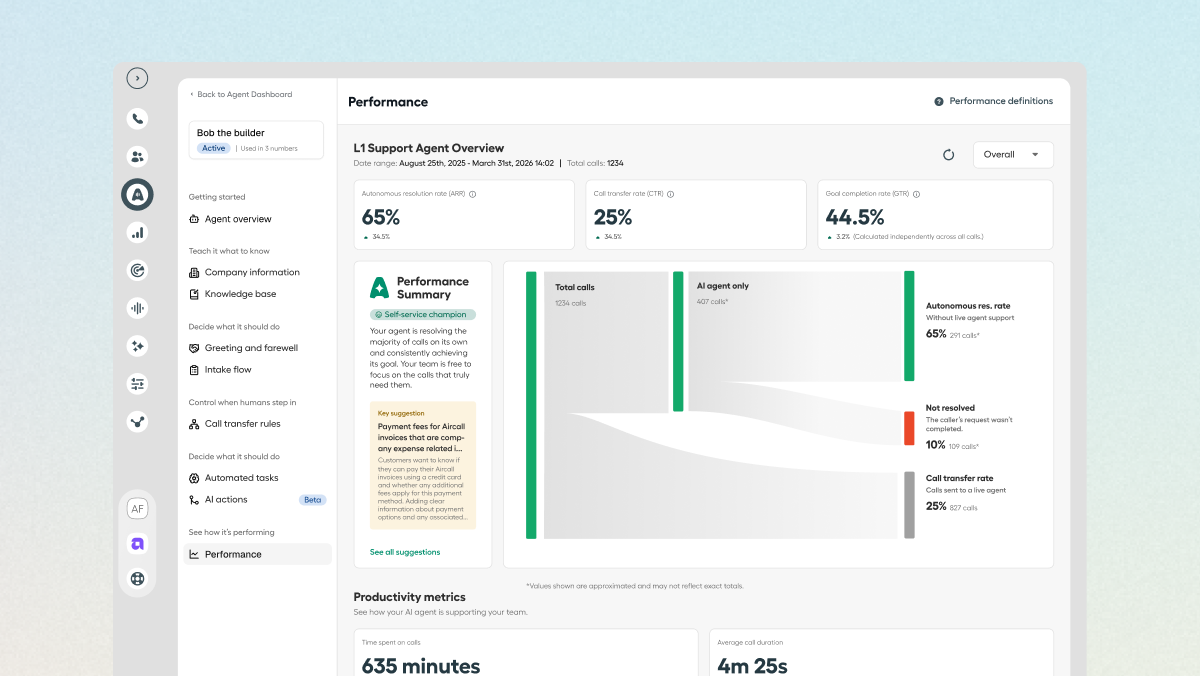

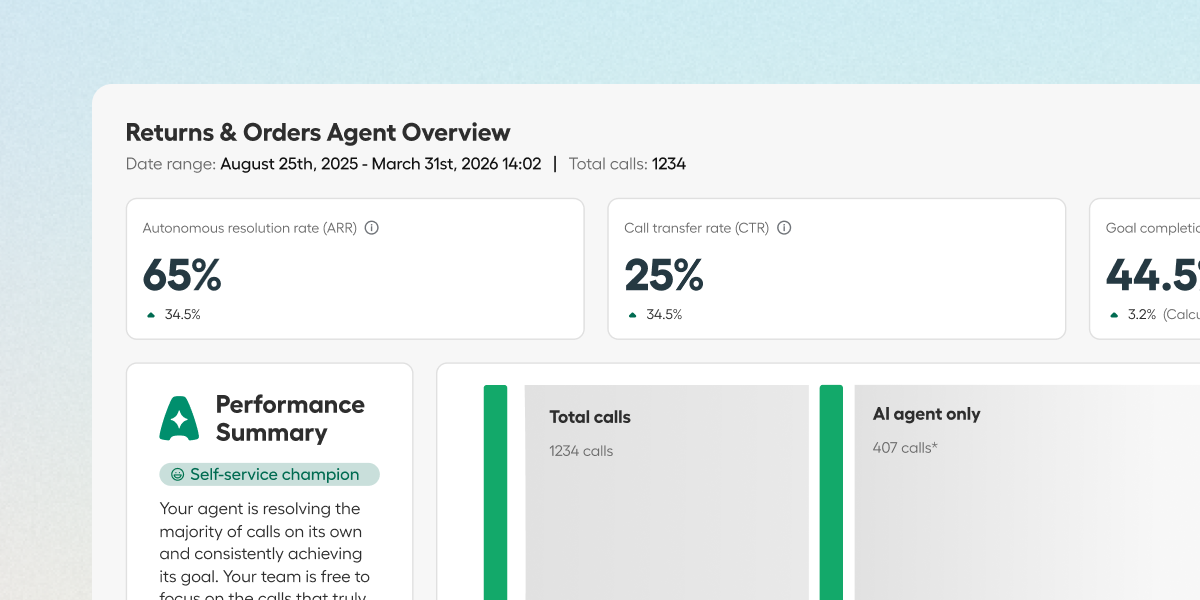

Agent Performance turns your AI agent into a compounding asset by making performance visible and improvement actionable. From a single dashboard, any admin can see exactly how their agent is performing, surface gaps before customers start feeling them, and act on clear recommendations immediately, with no manual call reviews required.

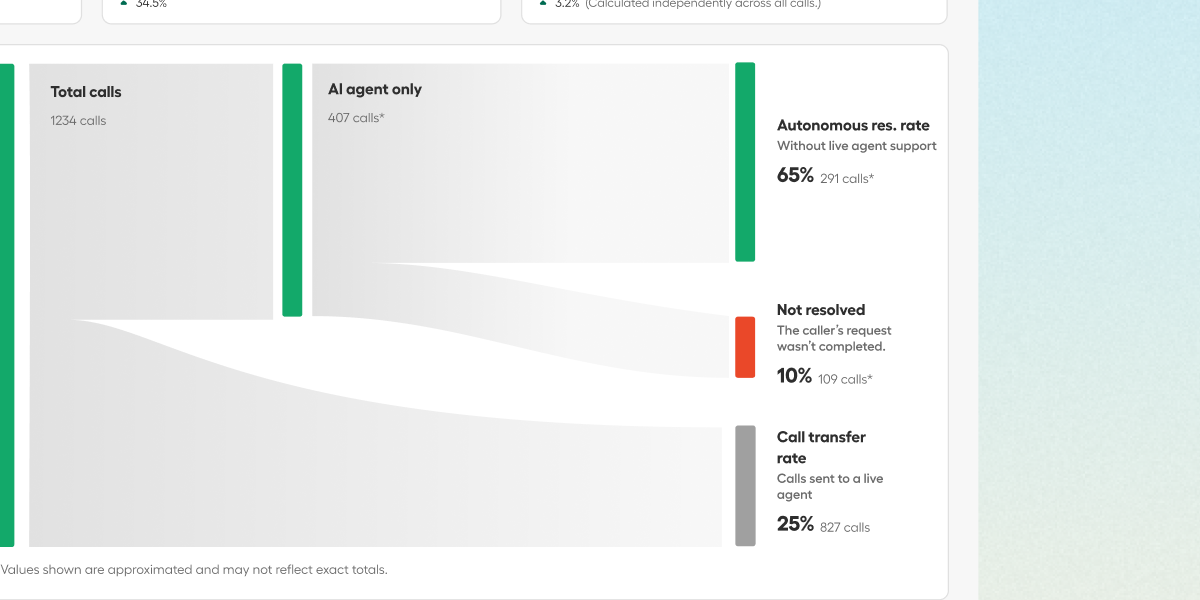

Three core metrics tell you how your agent is performing:

Autonomous Resolution Rate: How many calls your agent handles end-to-end without human intervention.

Goal Completion Rate: Whether it's achieving the specific outcome it was deployed for.

Call Transfer Rate: How often calls escalate to a human, and why.

Every interaction feeds into these numbers, so the longer your agent runs, the clearer your view of what's working and where attention is needed.
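For teams that want to sanity-check the arithmetic behind these three numbers, here is a minimal sketch in Python. It is not Aircall's implementation or API: the `CallRecord` fields, the function names, and the sample transfer reasons are all hypothetical, and it assumes each call has already been labeled with its outcome.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class CallRecord:
    """Hypothetical per-call record; field names are assumptions, not Aircall's schema."""
    resolved_without_human: bool          # agent handled the call end-to-end
    goal_completed: bool                  # agent achieved the outcome it was deployed for
    transferred_to_human: bool            # call escalated to a human
    transfer_reason: Optional[str] = None  # free-text reason, if transferred


def rate(count: int, total: int) -> float:
    """Return count/total as a percentage (one decimal), or 0.0 when there are no calls."""
    return round(100.0 * count / total, 1) if total else 0.0


def summarize(calls: list[CallRecord]) -> dict[str, float]:
    """Compute the three headline rates over a batch of labeled calls."""
    total = len(calls)
    return {
        "autonomous_resolution_rate": rate(sum(c.resolved_without_human for c in calls), total),
        "goal_completion_rate": rate(sum(c.goal_completed for c in calls), total),
        "call_transfer_rate": rate(sum(c.transferred_to_human for c in calls), total),
    }


def transfer_reasons(calls: list[CallRecord]) -> Counter:
    """Tally why calls escalated, covering the 'and why' part of Call Transfer Rate."""
    return Counter(c.transfer_reason for c in calls if c.transferred_to_human)


if __name__ == "__main__":
    sample = [
        CallRecord(True, True, False),
        CallRecord(True, True, False),
        CallRecord(True, False, False),
        CallRecord(False, False, True, "billing question"),
    ]
    print(summarize(sample))
    print(transfer_reasons(sample))
```

The three rates are computed independently here because a call can be resolved without a human and still miss its specific goal, which is why the metrics are worth tracking side by side.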

The Performance Summary goes one level deeper, surfacing structured insight into where your agent sits in its development, what it's doing well, and where the clearest opportunities for improvement are. Because it's generated from your agent's actual call data, the summary is specific to your configuration and your conversations.

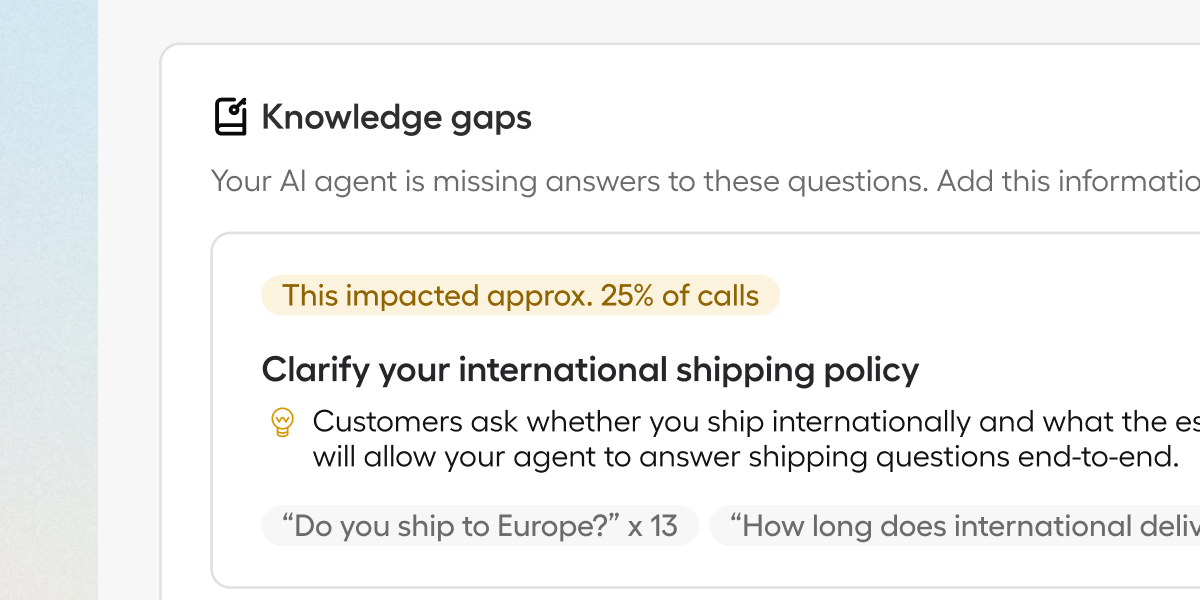

The Recommendations panel is where analysis turns into action. It identifies two categories of gap that can otherwise be difficult to surface without significant manual effort: knowledge gaps, where the agent is hitting questions it can't answer well, and configuration gaps, where the agent's logic isn't matching how customers actually use it. Both come with enough context to act on straight away. Insights start generating once an agent has handled 50 calls, the threshold at which patterns become reliable rather than noise, and the moment your improvement cycle really begins. The goal is to surface gaps before they become patterns, and before your customers start feeling them.

Solving the post-launch plateau

KPMG's Q1 2026 research found that 65% of companies are struggling to scale their AI deployments, a number that doubled in a single quarter. The investment and intent are clearly there, but what's missing is the infrastructure to improve systematically: the data, the feedback loops, the clear view of what's working and what isn't.

MIT's analysis of enterprise AI pilots identified the same pattern: companies invest heavily in getting live, and underinvest in what comes next. Researchers from Stanford reviewed 51 successful AI deployments and found that every one of them was built iteratively, with most teams experiencing failures before finding their footing. The difference between the teams that scaled and the teams that stalled came down to how quickly they could identify what was working, and build on it.

Speed to deployment still matters; the faster you launch, the faster you’ll reach the learning phase. And for what it's worth, it still surprises me as a Product Marketer when I hear about simple AI agent deployments taking weeks and requiring expensive consultants to get off the ground. Aircall's position has always been that if we're selling you a tool, we're also responsible for making sure you can actually use it, which is why our AI agents are designed for quick, self-service setup and our Forward Deployed Engineers are available for more complex workflows at no extra cost. But deployment, however smooth, is still the first move. Too many AI agent platforms make it easy to go live and hard to improve. Agent Performance is how any customer, not just the ones with the time and resources to review and iterate manually, can build an agent that keeps getting smarter, handling more, and compounding in value over time.

Conclusion

The businesses seeing the strongest results from AI aren't operating differently because they have more resources or bigger teams. They're operating differently because they understand that deployment is just the beginning, and that real results come from the iteration that follows. That discipline of continuous improvement is what turns an AI agent into something that compounds in value with every call it handles.

Aircall's goal, from the beginning, has been to give any small business access to what used to require technical resources and weeks of setup time: a calling platform built for self-service, integrations that work straight out of the box, analytics that don't need a data team, and AI agents that anyone can build and deploy in minutes. Agent Performance extends that commitment into the improvement cycle, and we'll keep building on it throughout 2026. Stay tuned!

Published on May 6, 2026.